Automated Builds – Part 4: Copy files to container

Sometimes you need to copy files into the Docker container before compiling the extension, for example when the solution uses custom .NET libraries. In this part of the series, I will show how to handle such cases.

If the solution does not need any additional files, you can skip this step in the build pipeline. Still, I think it is good to know what is possible.

Adding setup

Since I do not want a hardcoded list of files in the PowerShell script, I extended the settings.json file created in the previous article. I added the array below, which stores all files to be copied.

- ContainerAddtionalFiles – an array of file descriptions, one entry per file to copy. Each entry holds the three values below

- FileName – a name identifying the file that will be copied to the container

- FilePathInLocal – the full path to the file on the build server (the path must be accessible from the build server). Because this is JSON, remember to write every backslash in the path as a double one (\\)

- FilePathInContainer – the path in the Docker container where the file should be placed. Remember to double the backslashes (\\) here as well

For two files, the settings.json entry can look like this:

    "ContainerAddtionalFiles": [
      {
        "FileName": "Microsoft.Dynamics.Nav.DocumentService.dll",
        "FilePathInLocal": "c:\\temp\\Microsoft.Dynamics.Nav.DocumentService.dll",
        "FilePathInContainer": "C:\\Program Files\\Microsoft Dynamics NAV\\130\\Service\\Add-ins"
      },
      {
        "FileName": "Microsoft.Dynamics.Nav.DocumentService.Types.dll",
        "FilePathInLocal": "c:\\temp\\Microsoft.Dynamics.Nav.DocumentService.Types.dll",
        "FilePathInContainer": "C:\\Program Files\\Microsoft Dynamics NAV\\130\\Service\\Add-ins"
      }
    ]
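To make the double-backslash rule concrete, here is a minimal sketch of how such a fragment can be read in PowerShell. It is not taken from the repository script: the inline JSON (wrapped in braces so it parses on its own) and the variable names are only for illustration, and a real build would more likely load the text with `Get-Content -Raw` from settings.json.

```powershell
# Illustration only: an inline copy of the settings fragment with one entry.
# The single-quoted here-string keeps the backslashes exactly as written.
$json = @'
{
  "ContainerAddtionalFiles": [
    {
      "FileName": "Microsoft.Dynamics.Nav.DocumentService.dll",
      "FilePathInLocal": "c:\\temp\\Microsoft.Dynamics.Nav.DocumentService.dll",
      "FilePathInContainer": "C:\\Program Files\\Microsoft Dynamics NAV\\130\\Service\\Add-ins"
    }
  ]
}
'@

# ConvertFrom-Json turns every escaped "\\" into a single "\".
$settings = $json | ConvertFrom-Json

# foreach over a missing (null) property simply does nothing in PowerShell 3.0+,
# so a settings file without the array is handled without extra checks.
foreach ($file in $settings.ContainerAddtionalFiles) {
    Write-Host "$($file.FileName): $($file.FilePathInLocal) -> $($file.FilePathInContainer)"
}
```

After parsing, the paths contain ordinary single backslashes, so they can be passed directly to file cmdlets.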

Step in the pipeline

The PowerShell script that copies the files from the local path (on the build server) to the container is available here: https://github.com/mynavblog/AzureBuildPipeline/blob/master/BuildScripts/CopyFilesToDockerContainer.ps1

As you can see, it checks the settings and then copies the files in a loop. At the end, it also restarts the container, because the libraries must be visible to Business Central before the solution is compiled.

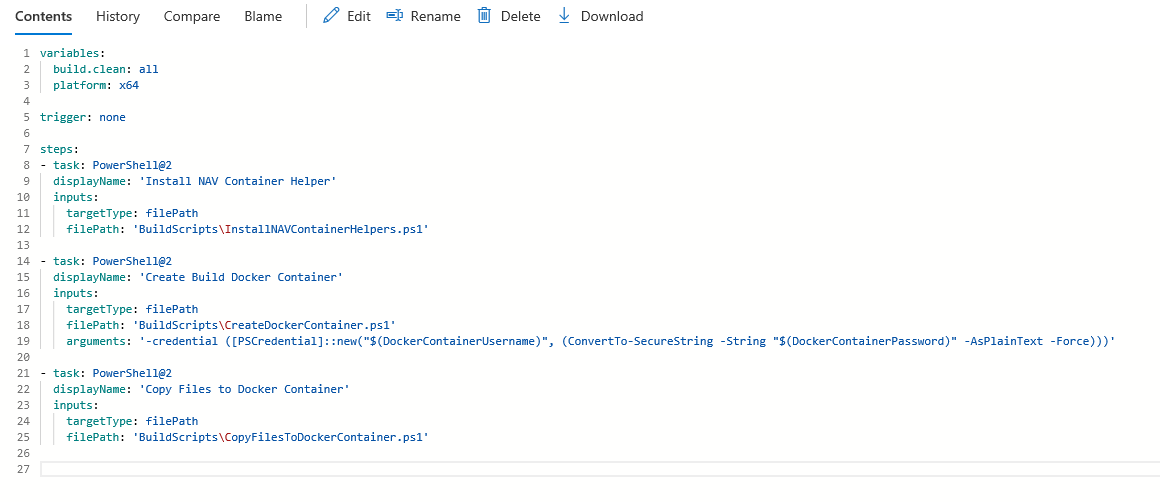
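For readers who cannot open the script, the flow described above (read the settings, copy in a loop, restart) can be sketched roughly as follows. This is my own simplified sketch and the script in the repository may differ. It assumes the navcontainerhelper module is installed on the build agent and uses its `Copy-FileToNavContainer` and `Restart-NavContainer` cmdlets; the function names `Get-CopyPlan` and `Copy-AdditionalFiles` and their parameters are hypothetical, and so is the choice to append FileName to the container folder.

```powershell
# Hypothetical helper: turns the settings object into (local, container) path pairs.
function Get-CopyPlan {
    param([Parameter(Mandatory)] [object] $Settings)

    foreach ($file in $Settings.ContainerAddtionalFiles) {
        [pscustomobject]@{
            LocalPath     = $file.FilePathInLocal
            # Assumption: FilePathInContainer is a folder, so the file name is appended.
            ContainerPath = $file.FilePathInContainer.TrimEnd('\') + '\' + $file.FileName
        }
    }
}

# Hypothetical wrapper: copies every configured file and restarts the container.
function Copy-AdditionalFiles {
    param(
        [Parameter(Mandatory)] [string] $ContainerName,
        [Parameter(Mandatory)] [string] $SettingsPath
    )

    $settings = Get-Content -Raw -Path $SettingsPath | ConvertFrom-Json
    $plan = @(Get-CopyPlan -Settings $settings)
    if ($plan.Count -eq 0) {
        Write-Host 'No additional files configured, nothing to copy.'
        return
    }

    foreach ($item in $plan) {
        if (-not (Test-Path -LiteralPath $item.LocalPath)) {
            throw "File not found on the build server: $($item.LocalPath)"
        }
        # Cmdlet from navcontainerhelper (assumed to be installed on the agent).
        Copy-FileToNavContainer -containerName $ContainerName `
                                -localPath $item.LocalPath `
                                -containerPath $item.ContainerPath
    }

    # Restart so the service tier sees the new libraries before compilation.
    Restart-NavContainer -containerName $ContainerName
}
```

Keeping the path calculation in its own small function makes it possible to check the plan on a machine without Docker, while only `Copy-AdditionalFiles` touches the container.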

Finally, add a new step to the pipeline. Edit the YAML file created earlier and add a new task.

    - task: PowerShell@2
      displayName: 'Create Build Docker Container'
      inputs:
        targetType: filePath
        filePath: 'BuildScripts\CreateDockerContainer.ps1'
        arguments: '-credential ([PSCredential]::new("$(DockerContainerUsername)", (ConvertTo-SecureString -String "$(DockerContainerPassword)" -AsPlainText -Force)))'

At this point, the YAML file should look similar to the one on the screen.

In the next article, I will show how to compile and publish a single extension to the Docker container.

“CopyFilesToDockerContainer.ps1” link not working,

https://github.com/mynavblog/AzureBuildPipeline/blob/master/BuildScripts/CopyFilesToDockerContainer.ps1

Thanks for the info. I have just corrected it.

“CopyFilesToDockerContainer.ps1” link not working,

Hi. Thanks for the comment. Please check https://github.com/mynavblog/AzureBuildPipeline instead. I made some changes to the file structure, and that is surely why the link stopped working.